108

Quotations

"The p-value was never intended to be a substitute for scientific reasoning."

Forsooth

"There are 33 percent more such women in their 20s than men. To help us see what a big difference 33 percent is, Birger invites us to imagine a late-night dorm room hangout that’s drawing to an end, and everyone wants to hook up. 'Now imagine,' he writes, that in this dorm room, 'there are three women and two men.'"

Cited in Imagine 33 percent at Jordan Ellenberg's "Quomodocumque" blog (20 November 2015).

Submitted by Priscilla Bremser

“I showed all the data together, which helped disguise the bimodal distribution. Nothing wrong with that. All the data is there. Every piece.... [But then he suggested using] thick and thin lines to try and dress it up, or changing colors to divert attention.”

Quoted in: Takata emails show brash exchanges about data tampering, New York Times, 4 January 2016

Submitted by Bill Peterson

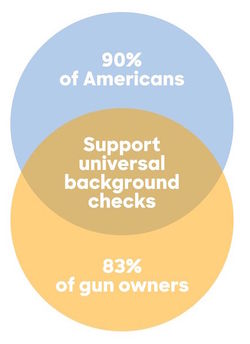

Cited in Hillary Clinton's campaign just released the worst Venn diagram of all time, Vox.com, 21 May 2016.

Suggested by Lucy Nussbaum

Simulating the lottery

Here’s $100. Can you win $1.5 billion at Powerball?

by Jan Schleuss, Los Angeles Times, 8 January 2016 (updated 12 Jan 2016)

By Wednesday January 13 of this year, the Powerball jackpot had reached $1.6 billion, making it the largest lottery jackpot in history. There were three winners that day. This article, published the week before as the prize was still growing, was one of many examples in the popular press intended to inform people how remote the chance of winning (1 in 292,201,338) really was. It includes an online tool that allows the user to select their lucky numbers, and then observe their fate over the course of 50 simulated drawings, which is $100 worth of plays. Here's the message I got after one try. No jackpot, but my minor prizes were automatically invested in more tickets.

You've played the lottery 54 times over about 6 months and spent $108, but won $8. You're in the hole $100. So why not throw some more money at that problem? [The options offered are to bet $100, $1000, or your whole paycheck.]

By contrast, the New York Times tried to explain the hopelessness in prose [1] (NYT, 12 January 2016). The article lists some time-honored comparisons: the 1 in 1.19 million chance that a US resident will be hit by lightning in a year and the 1 in 12,500 chance that an amateur golfer will make a hole-in-one.

For a fresher perspective, see Ron Wasserstein's excellent piece A statistician’s view: What are your chances of winning the Powerball lottery? (this originally appeared in the Huffington Post on 16 May 2013, and was updated 7 January 2016). Wasserstein calculates that if you took a dollar bill for each possible combination and laid them end to end, you could make a generous roundtrip tour from the east coast to the west coast--- and traverse it twice. Now he asks us to imagine trying to pick just one lucky dollar bill from the trip!

Followup

More than half of Powerball tickets sold this time will be duplicates

by Matt Rocheleau, Boston Globe, 13 January 2016.

This article appeared on the morning of the drawing. It noted that roughly 440 million tickets had been sold the previous Saturday. This is more than enough to cover all 292 million possible number combinations. However, according to lottery officials, only about 227 million of these combinations had actually been played, representing only 77.8% of the total.

The Globe solicited input from a number of professional statisticians to explain this phenomenon. Professor Timothy Norfolk of the University of Akron pointed out that people often play birthdays and other "lucky numbers" rather that choosing numbers at random, and tend to avoid certain patterns. Of course, many other players opt for machine-generated quick-picks. But even random selections would not be expected to cover all possible combinations. Norfolk added, “For example, if you roll a dice six times, you’re fairly unlikely to see it roll a different number each of the six times.”

The Globe avoided presenting any further calculations, but the required formulas are familiar from probability theory. In r independent rolls of a fair n-sided die, the probability that a particular face appears at least once is {1 - [(n - 1)/n]^r }, so the expected number of different faces that appear is given by

n * {1 - [(n - 1)/n]^r }

Taking n = 6 and r = 6 we see that in 6 rolls of an ordinary die, the expected number of different faces to appear is 6*(11/36) = 3.99, which is about 2/3 of the total possible. For the lottery example, taking n = 440 million and r = 292.2 million gives 227.4 million expected combinations, which is strikingly close to what was observed!

Submitted by Bill Peterson

Middle-age white mortality

Contemplating American mortality, again

The Economist, Daily Chart, 29 January 2016

Age-aggregation bias in mortality trends

Ice cream and IQ

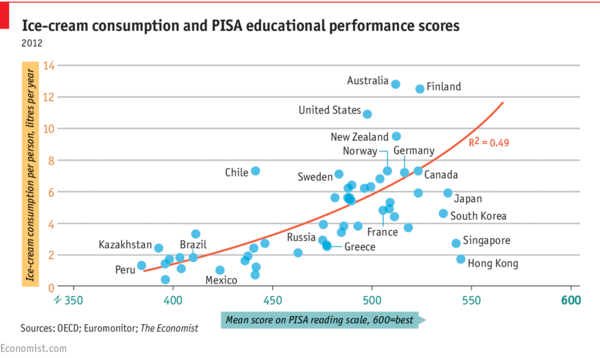

Daily Chart: Ice Cream and IQ

The Economist, 1 April 2016

The chart (reproduced below) shows per capita ice cream consumption for a sample of countries plotted against mean PISA (Programme for International Student Assessment) reading scores. A positive association (R^2 = 0.49) is revealed.

But note the date! From the comments section, you can see that not everyone immediately recognized the joke. However, several readers did point out that both variables are plausibly responses to income.

ASA issues statement on p-values

The ASA's statement on p-values: Context, process, and purpose

by Ronald L. Wasserstein and Nicole A. Lazar, The American Statistician, 70:2 (2016).

The report, based on contributions from 26 experts, enunciates six main principles, with discussion of each. The principles are reproduced below.

- P-values can indicate how incompatible the data are with a specified statistical model.

- P-values do not measure the probability that the studied hypothesis is true, or the probability that the data were produced by random chance alone.

- Scientific conclusions and business or policy decisions should not be based only onwhether a p-value passes a specific threshold.

- Proper inference requires full reporting and transparency.

- A p-value, or statistical significance, does not measure the size of an effect or the importance of a result.

- By itself, a p-value does not provide a good measure of evidence regarding a model or hypothesis.

Violations of the second principle are common in the popular press (and even appear in published research papers), and are often presented in the Forsooth section this wiki (here is one from Chance News 105).

Even explaining the issue can be tricky. When Nature News reported that the journal Basic and Applied Social Psychology would no longer publish papers presenting P-values, they had to issue the following correction:

Clarified: This story originally asserted that “The closer to zero the P value gets, the greater the chance the null hypothesis is false.” P values do not give the probability that a null hypothesis is false, they give the probability of obtaining data at least as extreme as those observed, if the null hypothesis was true. It is by convention that smaller P values are interpreted as stronger evidence that the null hypothesis is false. The text has been changed to reflect this.

A FiveThirtyEight blogpost provided numerous examples suggesting that Not even scientists can easily explain P-values.

In another post, FiveThirtyEight provided interactive demonstration of P-value hacking, entitled Hack Your Way To Scientific Glory. A scatterplot shows the strength of the economy vs. the degree of political power of your preferred party (choose Democrat or Republican). Your mission is to show that having your party "in control" of the government helps "the economy" by tweaking how the variables are defined.

Reproducibility crisis in psychology. Andrew Gelman, No, this post is not 30 days early: Psychological Science backs away from null hypothesis significance testing

John Oliver on scientific studies

Margaret Cibes sent a link to the following:

- John Oliver’s rant about science reporting should be taken seriously

- by John Timmer, arstechnica.com, 10 May 2016

https://www.youtube.com/watch?v=0Rnq1NpHdmw

spurious nutritional correlations from FiveThirtyEight.

We can also count on XKCD to pick up on such controversy: see Trouble for science.

Predicting recidivism

Machine bias: There’s software used across the country to predict future criminals. And it’s biased against blacks.

by Julia Angwin, Jeff Larson, Surya Mattu and Lauren Kirchner, ProPublica, 23 May 2016

On the Isolated Statisticians list, Jo Hardin cited Episode 17 of the Not so standard deviations podcast, by Hilary Parker and Roger Peng. It includes discussion of the above study (note: the link to ProPublica from the podcast page appears to be broken, but the above is correct).

Reproduced below is a table from the article is a table

| White | African American | |

|---|---|---|

| Labeled higher risk, but didn't re-offend | 23.5% | 44.9% |

| Labeled lower risk, yet did re-offend | 47.7% | 28.0% |

Also provide a link to the data on GitHub.

Exit polls

Margaret Cibes sent a link to the following: How exit polls work, explained

Exit polls, and why the primary was not stolen from Bernie Sanders

by Nate Cohn, "Upshot" blog, New York Times, 27 June 2016